Help your students avoid the academic pitfalls of AI chatbots

In other articles, we describe some risks associated with using AI chatbots for assignments as well as how students can maintain rigorous standards with their AI-assisted academic projects.

Today’s article highlights best practices for generating meaningful search inquiries.

“Students with learning differences are facing a crisis where the allure of letting AI tools do the heavy lifting may feel too strong to pass up. Yet they may not understand how to create effective research inquiries, or recognize when a chatbot’s algorithm is incorporating poor content into its response. To make matters worse, neurodivergent learners may not appreciate the value of academic struggle, and how circumventing a healthy challenge ultimately serves to erode their confidence.”

Problem: Ineffective Use of AI Writers as a Research Tool

We live in a culture where people often outsource judgment. Learners can internalize shame for academic missteps, and as a result, they may not trust their own reasoning. By contrast, AI writing generators bring forth slick and convincing output in a matter of seconds, which students may regard as more authoritative than their own ideas about a topic.

However, AI writers have limitations. Their algorithms construct text based on the phrasing of a research question. Students can’t just type a question once and expect to be given a pertinent or adequate answer. Leveraging AI tools requires skill in formulating meaningful inquiries and follow-up questions that will generate germane output from a chatbot. Yet many learners are unsure how to conduct effective research inquiries.

Just because it comes out of a machine doesn’t mean it’s relevant.

Neurodivergent learners may not fully understand how knowledge is constructed in the information age. Students using AI writers such as ChatGPT to get ideas for a writing assignment will quickly discover the breadth of existing knowledge about a subject. However, the first response from an AI chatbot may skate on the surface of a topic, be overly general, or not fully incorporate elements that are necessary to a student’s project focus. That first response is not a definitive guide to what belongs in the academic essay they are composing.

A high school student of mine recently needed help navigating an essay assignment on a book he had chosen from a list provided by his English teacher. He had also been given a list of literary themes he could cover in his paper. During class, his teacher suggested breaking the project into small steps, starting with answering several short questions designed to help the student establish a relationship between the novel and the literary theme.

To the teacher’s credit, he designed a learning project that modeled experimental risk-taking with an emerging technology. The teacher had not read the book, and used ChatGPT to create sample sub-prompts for the student to use. In essence, the assignment was a test-drive of the AI writer’s strengths and limitations.

During our coaching session, the student and I both attempted to identify a connection between the sub-prompts, the original essay assignment, and the novel. Regrettably, neither of us could.

While I appreciate that the teacher opted to offer learners a trailblazing educational experience, ChatGPT’s ability to comb through unthinkably huge piles of data at breakneck speed had not resulted in pertinent research questions for my student.

An internet search taught me enough about the story and its themes to suggest alternate questions that the student was then able to use to focus his essay subject. He identified quotes from the book that related to his chosen theme, and his essay was off and running.

While all was well, I was left wondering: what did the learner need that ChatGPT’s output did not provide?

I was not privy to the original queries and search terms that produced the essay sub-prompts, which were only weakly linked to how the literary theme played out in the book. Yet my student’s experience drove home the importance of teaching learners effective research skills, particularly when using new technologies.

Students can use iterative queries to hone meaningful content that informs the concepts they choose to include in the design of their projects. Keeping the same window open and asking follow-up questions drives the AI writer’s algorithm to drill down and get more specific information on a subject.

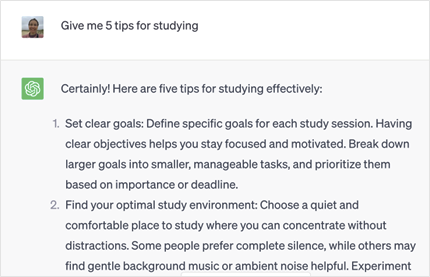

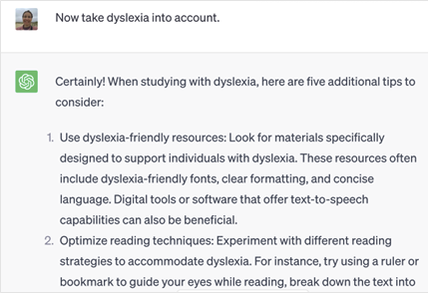

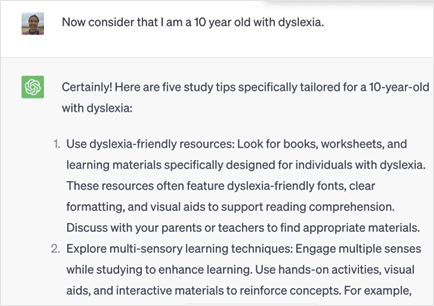

Here is an example of how to design successive prompts in ChatGPT to get increasingly meaningful output:

Question 1

Question 2

Question 3

By incorporating more detail into follow-up questions, students can challenge the chatbot to yield more useful content. With each successive inquiry in a dialog thread, the output in that thread becomes more tailored to the information the learner is seeking.
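For readers who like to see the mechanics, here is a minimal Python sketch of why staying in the same dialog thread matters. It is an illustration under stated assumptions, not a description of how ChatGPT is actually built: the `Thread` class, its method names, and the placeholder questions are all hypothetical, and no real chatbot is called. The sketch only models the general idea that a follow-up asked in an open thread arrives alongside the earlier exchange, while the same question typed into a fresh window arrives alone.

```python
from dataclasses import dataclass, field


@dataclass
class Thread:
    """Hypothetical stand-in for one open chat window."""
    messages: list = field(default_factory=list)

    def ask(self, question: str) -> list:
        """Add a question and return everything the chatbot would see."""
        self.messages.append({"role": "student", "text": question})
        return list(self.messages)

    def record_reply(self, reply: str) -> None:
        """Store the chatbot's answer so later questions build on it."""
        self.messages.append({"role": "chatbot", "text": reply})


# Same window: the follow-up carries the earlier exchange with it.
thread = Thread()
seen_first = thread.ask("Placeholder: a broad opening question")
thread.record_reply("Placeholder: a general overview")
seen_second = thread.ask("Placeholder: a narrower follow-up question")

# New window: the same follow-up arrives with no prior context.
fresh = Thread()
seen_fresh = fresh.ask("Placeholder: a narrower follow-up question")

print(len(seen_first), len(seen_second), len(seen_fresh))
```

In this toy model, the follow-up in the open thread is accompanied by the opening question and the first reply, which is one way to picture why each successive inquiry can be answered more specifically than a standalone question.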

Students don’t need to live under the false assumption that ChatGPT’s first response is an appropriate overview of the information they are beginning to research. AI writing tools can serve as a dialectical learning device, allowing for a Socratic investigation in which a succession of back-and-forth “conversations” leads to a series of deeper questions and answers.

Solutions and Tips: Coaches and educators must help students understand how to use AI tools such as ChatGPT to aid, rather than replace, critical thinking.

- Create assignments that encourage your learner to use ChatGPT for initial research, but then hone their argument using analysis and writing skills.

- Help your student navigate AI chatbots by first creating a prompt that will generate an overview of what has been written about a subject.

- Teach learners to zero in on the specific aspects of a subject, challenge, or task that most pertain to their goals and values with respect to the assignment.

- Guide your students to refine their thinking with iterative queries that steer the chatbot’s output, resulting in increasingly relevant responses.

- In the classroom, students can discuss and analyze different versions of chatbot responses they receive from their queries.

While AI chatbots are a robust writing resource, students leveraging them for research must discern the most pertinent aspects of a topic, craft effective follow-up questions to generate a meaningful response, develop a unique angle, compose original language, and incorporate personal knowledge and specific evidence into their content. Classroom time or a tutor can help learners cultivate research habits that allow them to utilize AI tools as an asset to learning.


As an executive function coach and academic tutor, I specialize in helping individuals with learning differences exceed their goals for academics, organization, and college transition.